First-Hand: The Navy Codebreakers and Their Digital Computers - Chapter 2 of the Story of the Naval Tactical Data System

By David L. Boslaugh, Capt. USN, Retired

Much has been written about the World War II British codebreaking machines developed at the Government Code and Cypher School at Bletchley Park near London. For example, N. Metropolis, J. Howlett, and G. Rota devote two articles in A History of Computing in the Twentieth Century to the history of British codebreaking machines, in particular the Colossus series, which had from 2,500 to 3,000 vacuum tubes and could do elementary add, subtract, multiply, and divide functions. Some historians even call the Colossi the world’s first computers. In addition to numerous histories of Bletchley Park, novels have been written and movies made about the activities there. On the other hand, Metropolis mentions only in passing the existence of the US Navy’s WW II counterpart, the US Naval Computing Machine Laboratory (NCML) at Dayton, Ohio. [Metropolis pp. vii-ix]

One of the few books (perhaps the only one) that mentions the wartime work at NCML states, “By the time Japan surrendered, the Americans [NCML] were building electronic machines using twice as many tubes as the British Colossus.” [Burke, Colin, Information and Secrecy - Vannevar Bush, Ultra, and the Other Memex, The Scarecrow Press, Inc., Lanham, MD, & London, 1994, ISBN 0-8108-2783-2, p. 310] Burke adds that the machines were not true data processors, even though they too could execute arithmetic instructions and even some conditional branching instructions. What kept them from being true general-purpose, stored-program digital computers was, of course, the lack of a random-access working memory to hold instructions and data.

This writer is sure that many other writers would have liked to write about these American codebreaking machines, if only they had known about them. The dearth of information on the USN projects appears to stem from the extremely stringent WW II navy security procedures surrounding cryptography. For example, when this writer interviewed people who had been involved, they were fairly open about their work on post-World War II codebreaking computers but would go silent when asked about their WW II activities. A security officer’s admonition, “If you ever talk about your work here, we can have you shot,” seems to have been very effective. Furthermore, the USN cryptologic community’s extreme compartmentation of work allowed a worker very little information about what he or she was really working on. Only a few people at the top knew how a project fit together.

Even my senior civilian department and branch heads, when I commanded the Naval Security Engineering Facility at the Naval Security Station in the early 1970s, volunteered very little information about their wartime codebreaking machine work. One of the few areas they did talk about was their work designing and building the ‘Purple’ machines used to break back the Japanese diplomatic code just before WW II. Army cryptologist William F. Friedman had broken back the diplomatic code, but a navy activity, the Navy Code and Signal Laboratory at the Washington Navy Yard, was tasked to build the machines based on Friedman’s findings. They felt free to talk about the Purple machine because David Kahn had written about it extensively in his book The Code-Breakers, and if a subject area had been openly published and cleared by the National Security Agency, they had no qualms about discussing it. [Kahn, David, The Code-Breakers - The Story of Secret Writing, The MacMillan Co., Toronto, Ontario, 1969, Library of Congress card no. 63-16109]

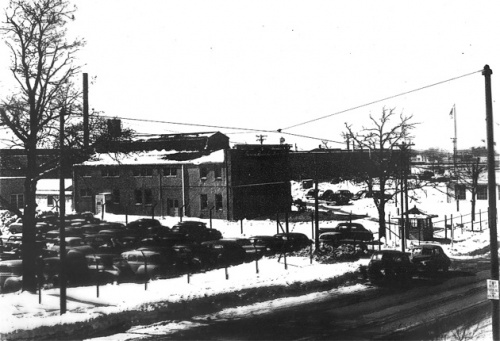

The navy had codebreaking machine design and production facilities at the Naval Computing Machine Laboratory, a Bureau of Ships field activity located at the National Cash Register (NCR) plant in Dayton. However, the codebreaking itself was done in the buildings of a former girls’ school, the Mount Vernon Seminary, in northwestern Washington, D.C. The activity was given the uninformative name ‘Communications Supplementary Activity, Washington’ (CSAW). (The name was later changed to the Naval Security Station.) Workers there usually called it ‘seesaw.’ The commanding officer of CSAW, CAPT Joseph N. Wenger, reported to the Director of Naval Communications in the Office of the Chief of Naval Operations (CNO), and his group of cryptanalysts, the Communications Intelligence Section, carried the code name OP-20-G. [Burke, pp. 53-54]

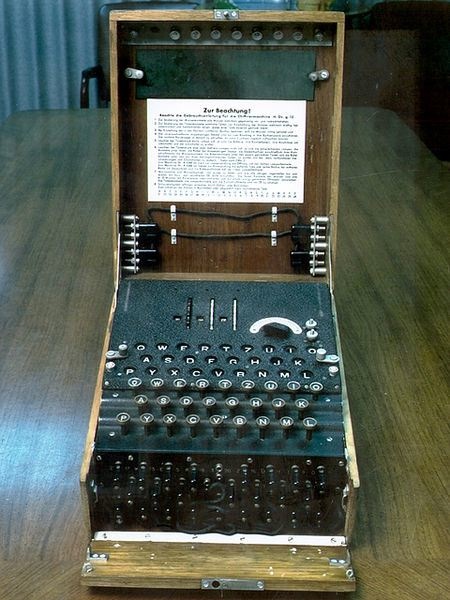

Seesaw’s main work was locating German submarines, which it did in two ways. First, most of the codebreakers were dedicated to breaking back high frequency radio transmissions to and from German submarines operating in the Atlantic. These transmissions were encrypted by German Enigma cipher machines and, if they could be broken back, gave valuable information on submarine locations and intentions. Second, CSAW was located on one of the highest points in Washington, D.C., which enabled its radio antennas to pick up transmissions relayed from the navy’s extensive network of high frequency direction finding stations around the Atlantic basin. When one of these stations detected a transmission from a German submarine, it immediately radioed the time and bearing to CSAW, where the bearing could be triangulated with bearings from other stations that had intercepted the same transmission, and a quick plot of the submarine’s estimated position could be made on a large wall map of the Atlantic Ocean. When correlated, the two sources of submarine location information gave OP-20-G a good picture of submarine locations and activity, enabling patrol airplanes to be dispatched to the location and helping to steer convoys around danger areas.
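To make the triangulation step concrete, here is a minimal sketch, not a reconstruction of CSAW’s actual plotting procedure. It assumes a flat plot with x measured east and y north, and bearings measured clockwise from true north; a real Atlantic plot would work with great-circle bearings on a chart. The function name `fix_from_bearings` is hypothetical.

```python
import math


def fix_from_bearings(p1, brg1_deg, p2, brg2_deg):
    """Intersect two direction-finding bearing lines on a flat plot.

    p1, p2: (x_east, y_north) station positions in any consistent unit.
    brg1_deg, brg2_deg: bearing to the transmitter, degrees clockwise from north.
    Returns the estimated (x, y) position of the transmitter.
    """
    # Unit direction vectors; a bearing of 0 points along +y (north).
    d1 = (math.sin(math.radians(brg1_deg)), math.cos(math.radians(brg1_deg)))
    d2 = (math.sin(math.radians(brg2_deg)), math.cos(math.radians(brg2_deg)))

    def cross(a, b):
        return a[0] * b[1] - a[1] * b[0]

    denom = cross(d1, d2)
    if abs(denom) < 1e-9:
        raise ValueError("bearing lines are parallel; no fix possible")
    # Solve p1 + t*d1 = p2 + s*d2 for t by crossing both sides with d2.
    diff = (p2[0] - p1[0], p2[1] - p1[1])
    t = cross(diff, d2) / denom
    return (p1[0] + t * d1[0], p1[1] + t * d1[1])


# Station A at the origin hears the sub bearing 045; station B, 10 units east, hears it bearing 315.
x, y = fix_from_bearings((0.0, 0.0), 45.0, (10.0, 0.0), 315.0)
print(f"estimated position: ({x:.1f}, {y:.1f})")  # estimated position: (5.0, 5.0)
```

With three or more stations the bearing lines rarely meet at a single point, so a plotter would estimate the position from the small area where they cross.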

In 1945, as World War II was winding down, the National Cash Register Co., like other companies supporting codebreaking machine production, advised OP-20-G that it intended to get out of building the machines as soon as hostilities ceased. Furthermore, OP-20-G realized that the navy’s cadre of engineers and scientists at NCML and CSAW who designed and developed new codebreaking devices would want to return to their civilian pursuits as soon as possible; military and civil service pay scales could not keep them working for the navy. On the other side of the coin, the CNO realized that the navy’s codebreaking load was not going to diminish at war’s end; it would only shift to target the new threat, the Soviet Union.

The situation called for unusual and desperate measures. The Secretary of the Navy approved CAPT Wenger’s proposal that the navy support the establishment of a private, for-profit company to research, develop, and build advanced navy codebreaking machines. It was agreed that the new company would provide its own startup capital and would get no special favors from the navy other than contracts for codebreaking devices. The company would be allowed to take on contracts from other sources, but its work for the navy was to be kept top secret. It was also agreed that the personnel and equipment of the Naval Computing Machine Laboratory would be moved from Dayton and collocated with the new company to guide and oversee its work. [Burke, pp. 314-315]

By 1945 businessman and financier John E. Parker could see that his troop-carrying glider factory, Northwestern Aeronautical Corp. of St. Paul, Minnesota, would soon be out of work. He needed new business and, through mutual friends, was introduced to CAPT Wenger, who convinced him to help finance the new company. Parker agreed on the condition that the new company would continue to use his plant at 1902 West Minnehaha Ave. in St. Paul. The building was actually owned by the War Assets Administration and leased by Parker. He and his investing partners decided to name the startup company Engineering Research Associates (ERA). By mid-1946 the twenty engineering duty officers and senior enlisted technicians of the NCML were in their new quarters on Minnehaha Ave., and ERA had hired 40 former CSAW technical staff.

[Tomash, Erwin and Cohen, Arnold A., “The Birth of an ERA: Engineering Research Associates, Inc. 1946-1955,” American Federation of Information Processing Societies, Inc., Annals of the History of Computing, Vol. 1, No. 2, Oct. 1979, pp. 86-87]

[Snyder, Samuel S., Influence of U.S. Cryptologic Organizations on the Digital Computer Industry, National Security Agency, Fort George G. Meade, MD, May 1977, p. 5]

The navy codebreaking machines of 1946 were special-purpose devices targeted against a specific encryption system. If the ‘enemy’ changed its encoding machine or introduced a new one, CSAW would have to physically modify its machines or, more likely, design and build a completely new codebreaking machine. The process was costly and time consuming. The holy grail sought by OP-20-G was a high-speed, general-purpose machine that could be retargeted to new coding systems with minimal time and effort. Even though they were making incremental advances toward such a device, they were not sure how to achieve the grail. [Burke, p. 315] This time it would be the U.S. Army Ballistic Research Laboratory at the Aberdeen Proving Ground, together with the University of Pennsylvania, that pointed the way to the next step.

ENIAC and EDVAC

ENIAC

It seems that necessity truly is the mother of invention. There had been a latent demand for high-speed automatic computing devices long before digital computers existed. One of these demands, the range tables needed to use a piece of artillery properly, finally sparked the breakthrough that led to the general-purpose digital computer. A range table shows how far a gun projectile will travel before impact for given angles of gun elevation, as modified by projectile weight, powder charge, and other variables. Here is how it happened.

First of all, developing a set of range tables for a new gun design from test firings alone would be wasteful and impractical: so many firings would be needed that the tables would take too long to develop, and the manpower and ammunition consumed would be unacceptable. In the beginning, range tables were prepared by human “computers” using mechanical desk calculators in a step-by-step integration process, with the results occasionally verified by a live firing. The problem was that it took a human about twenty hours to calculate one trajectory with a desk calculator, and each new gun needed about 500 trajectories in its range table. The manually calculated range table process for one new gun design took about a month. By early 1943 new guns were being delivered to the European theater without complete range tables. [McCartney, Scott, ENIAC - The Triumphs and Tragedies of the World’s First Computer, Walker and Company, New York, 1999, ISBN 0-8027-1348-3, pp 53-54, p 101]
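The “step-by-step integration” that a human computer carried out can be illustrated with a short program. This is a minimal sketch under stated assumptions, not the Ballistic Research Laboratory’s method: it treats the shell as a point mass over a flat earth with constant air density, uses a made-up drag constant `drag_k` in a simple quadratic drag law, and advances the equations of motion with explicit Euler steps. Real firing-table work used empirical drag functions and air density that varied with altitude. The function name `trajectory_range` is hypothetical.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def trajectory_range(v0, elevation_deg, drag_k=0.0, dt=0.01):
    """Estimate horizontal range (m) by stepping the equations of motion.

    v0: muzzle velocity (m/s); elevation_deg: gun elevation (degrees).
    drag_k: hypothetical drag constant in a = -k * |v| * v (1/m); 0 means vacuum.
    dt: time step (s). Explicit Euler steps, flat earth, constant air density.
    """
    theta = math.radians(elevation_deg)
    x, y = 0.0, 0.0
    vx, vy = v0 * math.cos(theta), v0 * math.sin(theta)
    while True:
        speed = math.hypot(vx, vy)
        ax = -drag_k * speed * vx
        ay = -G - drag_k * speed * vy
        # One integration step: new position from old velocity, then new velocity.
        nx, ny = x + vx * dt, y + vy * dt
        vx, vy = vx + ax * dt, vy + ay * dt
        if ny < 0.0:
            # Interpolate linearly to the point where the shell crosses ground level.
            frac = y / (y - ny)
            return x + frac * (nx - x)
        x, y = nx, ny


vacuum = trajectory_range(300.0, 45.0)  # no air resistance
closed_form = 300.0 ** 2 * math.sin(math.radians(90.0)) / G  # v^2 sin(2θ) / g
with_drag = trajectory_range(300.0, 45.0, drag_k=5e-5)
print(f"stepped: {vacuum:.0f} m, closed form: {closed_form:.0f} m, with drag: {with_drag:.0f} m")
```

In the drag-free case the stepped answer can be checked against the closed-form formula; with drag there is no such shortcut, which is why the stepping was necessary. Each trajectory is thousands of repetitions of the same few multiplications and additions, which suggests why one took a human with a desk calculator so many hours.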

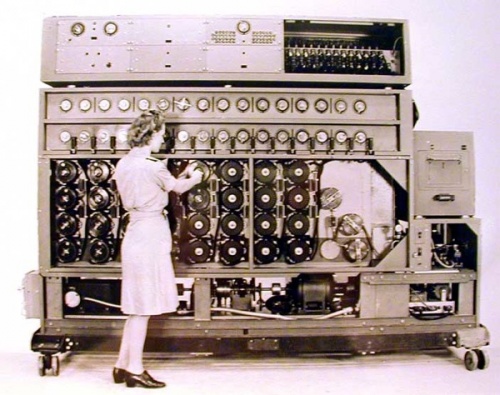

Soon after the beginning of World War II, the Moore School of Electrical Engineering at the University of Pennsylvania had entered into a contract with the Ballistic Research Laboratory at the nearby Army Aberdeen Proving Ground to prepare ballistic range tables for new army gun designs. The school owned two mechanical differential analyzers, patterned after the differential analyzer invented by Dr. Vannevar Bush of MIT in 1930. The differential analyzers could be set up to compute range tables, and both were pressed into such service. An analyzer could compute one trajectory in about 15 minutes, but it took hours to reset it to compute the next trajectory. The analyzers could not carry the entire computation load, so teams of women mathematicians working as “computers” prepared range tables in parallel with the analyzers. As we have noted, by early 1943 the Moore School was falling behind its contractual commitment to deliver range tables. [Metropolis, N., Howlett, J., and Rota, Gian-Carlo, A History of Computing in the Twentieth Century, Academic Press, Inc., 1980, ISBN 0-12-491650-3, p 314] [McCartney, p 101]

Next came automatic digital calculators, invented by Mr. George R. Stibitz of Bell Telephone Laboratories, which used electromechanical relays as binary logic elements. The required sequence of mathematical operations was fed into the relay calculators from punched paper tape, and even though the calculators used very large numbers of relays, they proved very reliable. A paper-tape program was often started in the evening, and the calculator ran unsupervised all night, with new trajectory calculations ready the next morning. One relay calculator could do the work of about 35 human computers; nevertheless, the combination of relay calculators and mechanical differential analyzers was still not equal to the task, and human computers were still needed. [Metropolis, pp 479-483]

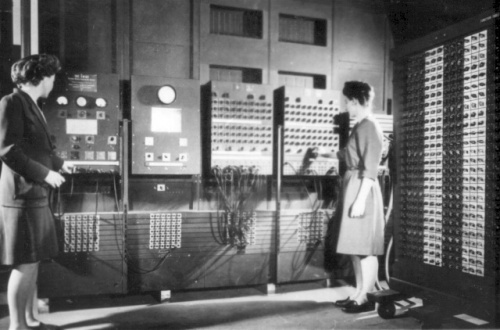

The duties of Dr. John W. Mauchly, who had joined the Moore School faculty in the fall of 1941, included supervising the teams of human computers preparing firing tables, for whom he devised the computational procedures. Mauchly had long been interested in using electronic vacuum tube circuits as high-speed computing elements. He envisioned an electronic calculator as a way to greatly speed up preparation of range tables, and in 1942 he and J. Presper Eckert, an electronics engineer employed by the Moore School, wrote a description and proposal for an electronic computer for calculating range tables. At first, the two called the machine an electronic differential analyzer because they knew that army officials trusted the mechanical differential analyzers, and they did not want to appear to be stretching new technology too far. Later they named it the Electronic Numerical Integrator and Computer, which gave the acronym “ENIAC.” Even so, they strove to make the device as general purpose as possible within the cost limits of high-speed electronic “memory” built from vacuum tube circuits. [Mauchly, John W., “Mauchly on the trials of building ENIAC,” IEEE Spectrum, Vol. 12, No. 4, Apr. 1975, pp 70-76] [Metropolis, pp 311-344]

The Army approved the Eckert-Mauchly proposal in early April 1943, and the two began an intensive design effort. The first challenge was to fully exploit the speed of vacuum tubes by devising electronic counting circuits that could reliably operate at 100,000 input pulses per second. Instead of binary logic circuits, they elected to use ten-stage decimal ring counters, which were easier to understand when programming and troubleshooting the system. They devised 25 specialized computing units that operated in parallel with each other under the control of a master programming unit. The machine also had 20 arithmetic accumulators, three function table units for inputting preprogrammed functions, a high-speed multiplier, a square rooter, a divider, a constant transmitter for input, and an output printer. All units were kept in synchronism by pulses from a master clock. Programming was done by wiring the units together with cables manually inserted into plug boards. [Metropolis, p 314]
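The decade ring counter idea can be sketched in a few lines. This is a simplified behavioral model, assuming one pulse per unit of a digit and an immediate carry into the next decade whenever a ring wraps from 9 back to 0; ENIAC’s real accumulators handled carries in a separate timing step and also held a sign, both of which this sketch omits. The class names `RingCounter` and `DecimalAccumulator` are hypothetical.

```python
class RingCounter:
    """One decade: ten stages in a ring, exactly one of which is 'on'."""

    def __init__(self):
        self.stages = [True] + [False] * 9  # stage 0 on means the digit is 0

    def digit(self):
        return self.stages.index(True)

    def pulse(self):
        """Pass the 'on' state to the next stage; return True on a 9 -> 0 wrap (a carry)."""
        d = self.digit()
        self.stages[d] = False
        self.stages[(d + 1) % 10] = True
        return d == 9


class DecimalAccumulator:
    """A chain of decade ring counters; a wrap sends a carry pulse to the next decade."""

    def __init__(self, decades=10):
        self.decades = [RingCounter() for _ in range(decades)]  # index 0 = units

    def add_pulses(self, decade, count):
        """Feed `count` pulses into one decade, letting carries ripple upward."""
        for _ in range(count):
            i = decade
            while i < len(self.decades) and self.decades[i].pulse():
                i += 1  # the wrap produced a carry pulse into the next decade

    def add(self, n):
        """Add a non-negative integer by sending each digit as a train of pulses."""
        for position, ch in enumerate(reversed(str(n))):
            self.add_pulses(position, int(ch))

    def value(self):
        return int("".join(str(d.digit()) for d in reversed(self.decades)))


acc = DecimalAccumulator()
acc.add(987)
acc.add(45)
print(acc.value())  # 1032
```

Reading the state of such a counter is simply a matter of seeing which of the ten stages is lit, which fits the text’s point that decimal rings were easier to follow when programming and troubleshooting than binary circuits would have been.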

ENIAC was ready to run its first program in December 1945. It used 17,468 vacuum tubes, occupied a 50-by-30-foot room at the Moore School, and could perform 5,000 addition cycles per second. The war was over and the need for ballistic tables had diminished, so the first program was a nuclear weapons calculation for the Los Alamos Laboratory, which it completed in February 1946. [Metropolis p 334] [McCartney pp 101-102]

EDVAC

While working on ENIAC, Eckert and Mauchly realized that they could make a computing machine much more capable and general purpose than ENIAC if program instructions, constants, and results could be stored in a large memory that worked at vacuum tube switching speeds. ENIAC had only a limited amount of ring counter storage that could work at electronic speeds, and it was used for temporary storage of computing results. However, building a high-speed memory of the size they envisioned out of vacuum tubes would be inordinately expensive, not to mention the room needed to house it and the power needed to light all the tube filaments.

Fortuitously, in his wartime work on moving target detectors for radars, Eckert had devised a method of storing strings of radar pulses as acoustic pulses traveling through a tube of mercury. He realized that if an electronic digital computer were designed to use binary logic, in which numbers are represented only by ones and zeros, he could store streams of binary digits in such a “mercury delay line.” Circuits could also be built to refresh the bit strings circulating in the lines indefinitely, forming a working high-speed computer memory. By midsummer 1944 Eckert and Mauchly had prepared a preliminary design of a general-purpose computer that could store instructions and data in a memory fast enough to work with an electronic central processing unit. [Mauchly, p 75]
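The recirculating principle can be modeled in software. In this minimal sketch a delay line is a queue of bit positions; on every bit-time the bit emerging at the output is sent back to the input (the refresh), unless a write replaces it. The word count and word length are illustrative assumptions (eight 44-bit words in one line), and `DelayLine` is a hypothetical name. The model also exposes the cost of this kind of memory: a read must wait until the wanted word comes around.

```python
from collections import deque


class DelayLine:
    """Recirculating serial memory: bits travel through the line and are
    re-injected at the input on every bit-time (the refresh circuit)."""

    def __init__(self, words, bits_per_word):
        self.bits_per_word = bits_per_word
        self.line = deque([0] * (words * bits_per_word))
        self.time = 0  # bit-times elapsed; the slot at the output is time % len(line)

    def tick(self, write_bit=None):
        """One bit-time: recirculate the output bit, or replace it with write_bit."""
        out = self.line.popleft()
        self.line.append(out if write_bit is None else write_bit)
        self.time += 1
        return out

    def _wait_for(self, address):
        """Idle (still refreshing) until the word's first bit reaches the output."""
        waited = 0
        while self.time % len(self.line) != address * self.bits_per_word:
            self.tick()
            waited += 1
        return waited

    def write(self, address, value):
        waited = self._wait_for(address)
        for i in range(self.bits_per_word):  # least significant bit first
            self.tick((value >> i) & 1)
        return waited

    def read(self, address):
        waited = self._wait_for(address)
        value = 0
        for i in range(self.bits_per_word):
            value |= self.tick() << i
        return value, waited


line = DelayLine(words=8, bits_per_word=44)
line.write(3, 123456789)
value, waited = line.read(3)
print(value, waited)  # 123456789 308
```

Here the read had to idle for 308 bit-times before word 3 came back around; that waiting is the price of serial storage.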

The two inventors presented their ideas to Lieutenant Herman H. Goldstine, the liaison officer representing the Army Ballistic Research Laboratory, and he supported them. In late August 1944 the Laboratory issued the Moore School a contract to flesh out the design of the new general-purpose computer and begin construction. The machine was named the Electronic Discrete Variable Automatic Computer (EDVAC). Goldstine also arranged for the renowned mathematician Dr. John L. von Neumann of the Institute for Advanced Study in Princeton to support them as a consultant. Von Neumann was regarded as one of the world’s leading experts in numerical analysis and, among other accomplishments, had done critical calculations for the design of the first U.S. atomic bomb. [Goldstine, Herman H., The Computer from Pascal to von Neumann, Princeton University Press, Princeton, NJ, 1972, ISBN 0-691-02367-0, pp 185-87]

Instead of a number of computing units operating in parallel, as in ENIAC, Eckert, Mauchly, and von Neumann devised an architecture with a single high-speed arithmetic unit that could add, subtract, multiply, and divide, as well as perform other functions such as finding square roots, converting between binary and decimal, and shifting and comparing. The arithmetic unit, like every other element of the machine, would take orders from a control unit, which would read instructions from memory and decode them for action. The control unit would read instructions in sequence unless the result of a previous operation called for a branch to some other part of the instruction sequence (the program). All operations would be done serially, one instruction at a time.
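The control-unit cycle described above (fetch the next instruction, decode it, execute it, and branch only when a result calls for it) can be shown with a toy machine. The order code here is entirely hypothetical and is not EDVAC’s instruction set; it only illustrates that instructions and data share one memory and that a conditional branch lets a short program loop. The function name `run` is likewise an assumption.

```python
def run(memory, max_steps=10_000):
    """Toy single-accumulator stored-program machine (hypothetical order code).

    memory: one list holding both instructions (tuples) and data (ints).
    Orders: ("LOAD", a) ("ADD", a) ("SUB", a) ("STORE", a)
            ("JUMP", a) ("JUMPNEG", a) ("HALT", None)
    Returns the memory after HALT.
    """
    acc = 0
    pc = 0  # address of the next instruction
    for _ in range(max_steps):
        op, addr = memory[pc]  # fetch
        pc += 1                # default: take the next instruction in sequence
        if op == "LOAD":       # decode and execute
            acc = memory[addr]
        elif op == "ADD":
            acc += memory[addr]
        elif op == "SUB":
            acc -= memory[addr]
        elif op == "STORE":
            memory[addr] = acc
        elif op == "JUMP":
            pc = addr
        elif op == "JUMPNEG":  # conditional branch on a previous result
            if acc < 0:
                pc = addr
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown order {op!r} at address {pc - 1}")
    raise RuntimeError("step limit reached")


# Sum 5 + 4 + 3 + 2 + 1; the program and its data sit in the same memory.
program = [
    ("LOAD", 11),    # 0: acc = total
    ("ADD", 10),     # 1: acc += counter
    ("STORE", 11),   # 2: total = acc
    ("LOAD", 10),    # 3: acc = counter
    ("SUB", 12),     # 4: acc -= 1
    ("STORE", 10),   # 5: counter = acc
    ("SUB", 12),     # 6: acc = counter - 1, negative only when counter is 0
    ("JUMPNEG", 9),  # 7: leave the loop when done
    ("JUMP", 0),     # 8: otherwise repeat
    ("HALT", None),  # 9
    5,               # 10: counter (data)
    0,               # 11: total (data)
    1,               # 12: the constant one (data)
]
memory = run(program)
print(memory[11])  # 15
```

Changing the problem means changing the contents of memory rather than replugging cables, which is the flexibility ENIAC lacked.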

Other elements of the architecture included the delay line memory with its refresh and control circuits, and an input-output unit that could read programs or data from punched paper tape, punched cards, and later magnetic tape. The input-output unit could also send computation results to printers, tape units, or card punches. The memory used 126 mercury delay lines, which could store 1,024 forty-four-bit binary numbers. Because of its high-speed serial binary logic, EDVAC used only 3,500 vacuum tubes, compared with roughly 18,000 in ENIAC. [Tomkins, C. B., Wakelin, J. H., and Stifler, W. W., Jr., of the Staff of Engineering Research Associates, Inc., High Speed Computing Devices, McGraw-Hill Book Co., Inc., New York, 1950, pp 200-201] [Goldstine, p 204]

In addition to his contributions to the EDVAC design, Dr. von Neumann kept copious notes as the design progressed. In June 1945 he condensed his notes into a 101-page working document, titled First Draft of a Report on the EDVAC, which he had reproduced and distributed to 24 people working on the EDVAC project. The project was classified Army Confidential, so to make the working document easier to use, he wrote it at a level of abstraction that army officials said would not need a security classification. This meant the report could be circulated outside the project; many requests for copies were received, and it was eventually widely distributed in academia and industry. It became the seminal document describing the general-purpose stored-program digital computer. Because von Neumann’s name was the only one on the title page, the design became known as the von Neumann computer architecture, and, much to the chagrin of Eckert, Mauchly, and others working on the project, history seems to credit von Neumann as the inventor of the modern digital computer. [Goldstine pp 196-198]

By 1950 EDVAC had been installed at the Army’s Ballistic Research Laboratory in Aberdeen, MD, and was in testing. [Tomkins, p 201] In the meantime, in March 1946, Eckert and Mauchly had resigned from the Moore School of Electrical Engineering because of a dispute with the school over ownership of the ENIAC patents. The two formed their own company, the Electronic Control Company, intending to manufacture and sell commercial digital computers. We will hear more of Eckert and Mauchly later. [Stern, Nancy, “From ENIAC to UNIVAC,” IEEE Spectrum, Vol. 18, No. 12, Dec. 1981, pp 61-65]

WHIRLWIND

Before we tell the story of the U.S. Navy’s first codebreaking computers, we need to review two other early computer development projects that would strongly influence the codebreaking computers and the Navy’s first seagoing tactical digital computer systems. The first of these began life as a navy project but ended up as a U.S. Air Force project, culminating in the physically largest digital computers ever built.

In 1943 Commander Luis de Florez, head of the Special Devices Division of the Navy’s Bureau of Aeronautics, wished to simulate the dynamics and pilot handling qualities of newly designed aircraft without actually building and flying the airplane. What he had in mind was a highly sophisticated version of the venerable Link blind flying trainer in which a pilot could man a simulated airplane cockpit and experience airplane flight responses as if he were flying the real airplane. He envisioned a large electronic analog computer, programmed with airplane stability derivatives obtained from wind tunnel testing of models of the aircraft to be simulated, which would compute the craft’s path through the air, its velocity, its lateral and vertical translations and its motions in roll, pitch and yaw. The computer would even move the cockpit by sending signals to mechanical servomechanisms.

De Florez contracted with the Servomechanisms Laboratory of the Massachusetts Institute of Technology in November 1944 to build his ‘Aircraft Stability and Control Analyzer,’ which was named “Device 2-K.” Twenty-six-year-old electrical engineer Jay Forrester was selected to head the project and deliver the device by March 1947, but by the fall of 1945 he was dissatisfied with the design progress of the very complex analog computer. [Redmond, Kent C. and Smith, Thomas M., “Lessons from ‘Project Whirlwind’,” IEEE Spectrum, Vol. 14, No. 10, Oct. 1977, pp 51-52]

In October 1945 Forrester attended a conference on advanced computation techniques held on the MIT campus. There he learned about the Moore School’s ENIAC computer and became convinced that a digital computer could be simpler and more reliable than the massive analog computer envisioned for the 2-K flight simulator. When Forrester returned from the conference he told his engineers, “We are no longer building an analog computer; we are building a digital computer.” His seniors at the Servomechanisms Lab supported his decision and endorsed his January 1946 proposal to the Special Devices Division of the Navy’s Office of Research and Invention, which had inherited the 2-K project from the Bureau of Aeronautics. By March 1946 the flight simulator project was of diminishing interest to the Navy, but the Office of Research and Invention did approve development of the computer, to be completed at a cost of $1.2 million and delivered by June 1948. [Pugh, Emerson W., Memories That Shaped an Industry - Decisions Leading to the IBM System/360, The MIT Press, Cambridge, MA, 1984, ISBN 0-262-16094-3, pp 63-64] [Metropolis, N., Howlett, J., and Rota, Gian-Carlo, A History of Computing in the Twentieth Century, Academic Press, Inc., Orlando, FL, 1980, ISBN 0-12-491650-3, p 365]

The Navy named the project ‘Whirlwind,’ and it was to be a very fast machine capable of controlling processes, such as the flight simulator, in ‘real time.’ Because of the speed requirement, Forrester and his staff chose parallel logic for numerical operations, in which all bits of a word are processed simultaneously, rather than serial logic, in which the bits are processed one after the other. The parallel logic, in turn, needed a memory that could keep pace with it. After investigating the EDVAC’s mercury delay line memory and the rotating magnetic drum memory being made by a new company named Engineering Research Associates, Forrester concluded that neither was fast enough for WHIRLWIND. In February 1946 he investigated various computer development projects that planned to use ‘electrostatic storage tubes’ as computer memory. These tubes stored binary information as charged spots on the face of a cathode ray tube. He found that although they were bulky and had limited storage, they could operate at electronic vacuum tube speeds. He reluctantly decided to start development using electrostatic storage but to continue looking for other high-speed memory. [Pugh, pp 66-67]

By early 1947 Forrester’s group had completed WHIRLWIND’s logical design and started building and testing the machine incrementally, section by section. By 1949 the central processing unit was working with a small electrostatic storage unit, and by 1950 a row of storage tubes was working with the CPU. [Metropolis p 367, p 372]

The Vinson Bill of 1945 directed the Navy to reorganize its management of research and development projects from a wartime to a peacetime footing, and by mid-1946 the Office of Research and Invention had been subsumed by the newly created Office of Naval Research (ONR). WHIRLWIND was inherited by ONR’s Mathematics Branch, which was primarily interested in developing computers for mathematical analysis and scientific calculations and could see little need for a fast process control computer. Furthermore, the branch was appalled when MIT requested more than $1.8 million to cover WHIRLWIND development from 1 July 1948 to 30 September 1949, more than twice what it had budgeted. By mid-1949 ONR was seriously considering terminating the WHIRLWIND project. [Redmond, pp 56-58]

The U.S. Air Force SAGE System

A Continental Air Defense Dilemma

The Soviet Union’s successful atomic bomb test of August 1949 would prove to be WHIRLWIND’s salvation. It brought home to General Hoyt Vandenberg, the U.S. Air Force Chief of Staff, the vulnerability of the United States to attack by nuclear-armed Soviet bombers, and his Vice Chief of Staff convened a meeting of the Air Force’s Scientific Advisory Board to advise on improving the continental air defense system to cope with the new threat. Board member George E. Valley, Jr., an MIT professor of physics, proposed that the Scientific Advisory Board set up an ‘Air Defense Committee’ of technical experts drawn from the board’s many panels. The Committee would first investigate the existing air defense system and then recommend technical improvements. Valley also proposed that the Committee should not work in abstract isolation but should have ground radar sites, as well as a squadron of interceptor aircraft, at its disposal. General Vandenberg did not take long to approve the proposal, and by mid-December 1949 the ‘Air Defense System Engineering Committee’ (ADSEC), chaired by George Valley, was in existence. [Redmond, Kent C., and Smith, Thomas M., From WHIRLWIND to MITRE - the R&D Story of the SAGE Air Defense Computer, The MIT Press, Cambridge, MA, 2000, ISBN 0-262-18201-7, p 19, p 22]

One of ADSEC’s earliest findings was that the newly established Air Force not only had no research and development projects investigating improvements to air defense but also had no organization dedicated to managing research and development. What little R&D was done fell under the Air Materiel Command, whose main thrust was procurement and supply. In response, the USAF created the Air Research and Development Command on 23 January 1950. [Redmond 2, p 27]

The Committee also found that the manual radar data plotting procedures, and the voice radio and telephone communications used to transmit radar target track data, at USAF fighter direction facilities lacked the capacity and speed to keep up with the jet-propelled interceptors they were directing, as well as with the expected Soviet jet bombers. (The USAF was having the very same difficulties the Navy was experiencing in handling fleet air defense radar data.) The Committee proposed that each facility have automated radar data analyzers tied to centralized command facilities by high-speed data lines. Furthermore, the centralized facilities should have fairly powerful data analyzers to assimilate the radar data and provide recommendations for directing interceptor aircraft and antiaircraft batteries. It took no stretch of the imagination to further propose that the data analyzers be based on the newly emerging general-purpose digital computers. Valley did admit that only the “haziest notions were had of what the central computer would be like or what it would actually have to do.” [Redmond 2, pp 27-28] Little did he realize that the solution was taking shape right on his own campus.

In early January 1950 George Valley had a chance discussion with MIT colleague Jerome Wiesner, an administrator at MIT’s Research Laboratory of Electronics. Valley happened to mention to Wiesner his difficulty in finding anyone who could shed light on the large data processor he needed for his conceptual air defense information gathering and correlation center. Wiesner arranged for Valley to meet an engineer who was working on what could be the solution: Jay Forrester and his financially challenged WHIRLWIND project.

We Can Do It with WHIRLWIND

Forrester and some of his key engineers were invited to ADSEC meetings, where the radar data handling problem was laid out in more detail and the WHIRLWIND engineers were asked what would have to be done to enable the computer to assimilate and process radar data. They jointly worked out research project details with engineers from the Air Force’s nearby Cambridge Research Laboratory, which was already studying how to digitize radar data and transmit it in digital form over telephone lines. By March 1950 the USAF had allocated $300,000 for joint use by the Cambridge Lab and the WHIRLWIND project for radar data handling studies. The Cambridge Lab was to be in charge of overall design, development, and testing of the new air defense system, and MIT was to provide the prototype central computer as well as computational and computer programming support. WHIRLWIND was still alive. [Redmond 2, pp 34-35]

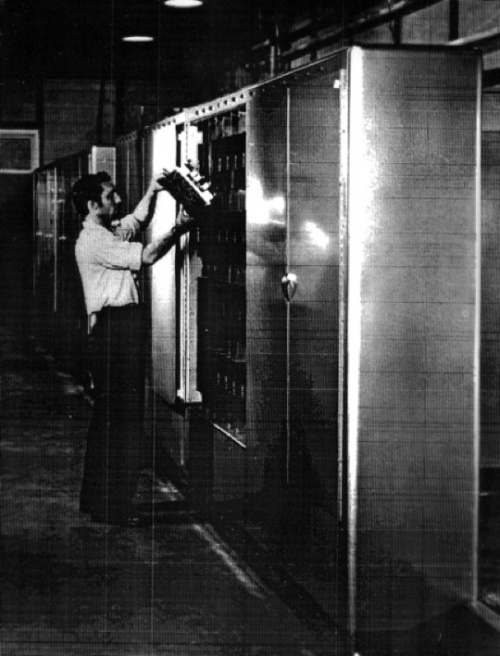

By late March 1951 construction and testing of the WHIRLWIND computer was essentially complete, and it was up and running with electrostatic memory approximately 35 hours per week. It occupied 2,500 square feet and used 5,000 vacuum tubes as well as 11,000 crystal rectifiers. For terminal equipment it used automatic typewriters (Flexowriters) for human inputs, paper tape readers and punches, two rotating magnetic drums for short term storage, five magnetic tape units, and prototype operator displays for viewing the computer generated radar picture. The displays, called Charactron tubes, could generate various types of symbols and place them on the screens under computer command. Operators could identify a specific target for computer attention by placing a ‘light gun’ over a computer generated blip. The light gun sensed the computer’s refresh of the blip, and thus the computer knew that the target of interest was the one it had just refreshed. [Pugh, p 74] [Redmond 1, p 57]
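The light gun trick relies only on timing: the computer redraws blips one at a time, so whichever blip it has just drawn when the gun fires must be the one the operator is pointing at. The Python sketch below illustrates that idea under stated assumptions; it is not WHIRLWIND's actual program. `Blip`, `light_gun_sees`, `refresh_cycle`, and `aim_radius` are hypothetical names, and the gun's position is used only to simulate its photocell, never by the selection logic itself.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Blip:
    track_id: int
    x: float
    y: float


def light_gun_sees(blip: Blip, gun_x: float, gun_y: float, aim_radius: float) -> bool:
    """Hypothetical photocell model: fires only if the spot just drawn lies inside its aperture."""
    return (blip.x - gun_x) ** 2 + (blip.y - gun_y) ** 2 <= aim_radius ** 2


def refresh_cycle(display_list: list[Blip], gun_x: float, gun_y: float,
                  aim_radius: float = 1.0) -> Optional[int]:
    """Redraw every blip in turn. If the gun fires right after a blip is drawn,
    the blip just refreshed is taken to be the operator's selection."""
    for blip in display_list:
        # "Draw" this blip; on the real tube this briefly brightens one spot.
        if light_gun_sees(blip, gun_x, gun_y, aim_radius):
            return blip.track_id  # the target the computer has just refreshed
    return None  # gun not aimed at any blip during this cycle


blips = [Blip(1, 10, 10), Blip(2, 40, 25), Blip(3, 70, 5)]
print(refresh_cycle(blips, 40.5, 24.5))  # 2
```

The design point is that the computer never needs to measure where the gun is aimed; it only needs to know which item in its display list it was drawing at the moment the gun responded.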

WHIRLWIND was also capable of receiving radar data from a prototype early warning radar, and was programmed to compute interceptor vectors to bring a controlled interceptor into a collision course interception of a target airplane, both of which were being tracked by the radar. On 20 April 1951 it successfully directed three live intercepts. [Redmond 2, p 1] The next step was to expand system capability from tracking just one target and controlling just one interceptor to simultaneously tracking a number of targets and interceptors by processing inputs from a number of radars. Whereas the WHIRLWIND computer was located in the Barta Building on the MIT campus, a complete experimental air defense system needed numerous radars of various types, airfields from which interceptors could be deployed, and communication links between the radars and airfields and the ‘direction center’ where WHIRLWIND was located. The resulting system was called the Cape Cod Experimental Air Defense Sector, and it included a number of radars on Cape Cod as well as radars on offshore islands and other radars on the mainland, some of which were more than 100 miles from the direction center. It also included Otis Air Force Base on Cape Cod and Bedford AFB. [Metropolis, p 377] The Air Force would later call the network of air defense sectors the Semi Automatic Ground Environment, or “SAGE.”

With the experimental system the USAF and MIT proved out the SAGE concepts. They showed that radar data could be transmitted to the computer from numerous sites, that the computer could assess simulated hostile targets and rank them in a priority order of engagement, that it could recommend assignment of targets to appropriate missile batteries or interceptors, and that it could compute interceptor vectors to guide the interceptors to their targets. On 6 May 1953 the Air Force announced that it had sufficient confidence in SAGE system progress that it was canceling a competing continental air defense R&D program at the University of Michigan’s Willow Run Research Center, and that from then on all USAF effort would go into building out a prototype SAGE system. [Redmond 2, p 269]

The Search for a Better Memory

It is appropriate at this point to drop back to the year 1946, when Jay Forrester reluctantly decided to use electrostatic storage tubes for WHIRLWIND’s high speed internal storage for want of any better technology. (It should be noted that in 1946 computer engineers had not yet coined the term ‘memory’; they called it internal storage.) [Redmond 2, p 50] Even after the decision, Forrester continued to look for a better storage technology, not realizing that he was going to have to invent it himself. Forrester calculated that for real time process control, such as the 2-K flight simulator, internal storage needed to be able to respond to a data query in about six microseconds, whereas electrostatic storage had an average ‘access time’ of about 25 microseconds and at very best could respond in about ten microseconds. He also wanted a storage medium much more reliable than the electrostatic storage tubes. [Redmond 1, p 54]

Forrester also had another goal for an ideal storage medium. Electrostatic storage tubes were bulky, and only the surface of the tube stored data, in a two-dimensional array of charged spots. His ideal medium, by contrast, should store data in a more compact three-dimensional array where any storage element in the array (one bit of data) could be selected by three wires, one wire for each dimension. After experimenting with various potential nonlinear storage devices such as gas glow discharge cells, he found that though some media worked some of the time, he could not get consistent results. That is, until he learned in the spring of 1949 about a magnetic material called ‘Deltamax’, which seemed to have a well defined, repeatable hysteresis loop when switched from one magnetic state to another. He wrapped strips of Deltamax around small ceramic toroids and wired them into a two-dimensional array, with wires passing at right angles through each toroid in the array. He found that by sending current pulses simultaneously through the two wires defining one toroid in the array, each pulse too weak by itself to switch a core, he could switch the magnetic state of that element without switching any of the other elements. He also found that by weaving a sense wire back and forth through every element in the two-dimensional array he could determine, from the current induced in the sense wire, the magnetic state that had previously been set into the element. [Pugh, pp 66-76]
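The coincident-current selection described above can be modeled in a few lines. This is a minimal Python sketch under stated assumptions, not a description of Forrester's Deltamax apparatus: each core is idealized as switching only when its total drive reaches a full switching current, each selected row and column wire carries half that current, and reading uses the common destructive-read scheme (drive the core toward 0 and see whether the sense wire picks up a flip, then rewrite). `CorePlane`, `SWITCH_CURRENT`, and the method names are hypothetical.

```python
# Idealized square-loop core: it flips only when the total drive reaches the full switching current.
SWITCH_CURRENT = 1.0
HALF_CURRENT = SWITCH_CURRENT / 2


class CorePlane:
    """Hypothetical two-dimensional core plane with one row wire and one column wire per core."""

    def __init__(self, rows: int, cols: int) -> None:
        self.state = [[0] * cols for _ in range(rows)]  # stored bit (magnetization) per core

    def _pulse(self, row: int, col: int, direction: int) -> int:
        """Send half-current on row wire `row` and column wire `col` at the same time.
        Returns how many cores flipped, i.e. what a sense wire threaded
        through every core in the plane would detect."""
        target = 1 if direction > 0 else 0
        flips = 0
        for r, line in enumerate(self.state):
            for c, bit in enumerate(line):
                # A core on one energized wire gets half-current; only the core
                # at the intersection of both wires gets the full switching current.
                drive = HALF_CURRENT * ((r == row) + (c == col))
                if drive >= SWITCH_CURRENT and bit != target:
                    line[c] = target
                    flips += 1
        return flips

    def write(self, row: int, col: int, bit: int) -> None:
        self._pulse(row, col, -1)  # clear the selected core to 0
        if bit:
            self._pulse(row, col, +1)  # then set it to 1 if storing a 1

    def read(self, row: int, col: int) -> int:
        # Destructive read: drive toward 0; a sense pulse means the core held a 1.
        bit = 1 if self._pulse(row, col, -1) else 0
        if bit:
            self._pulse(row, col, +1)  # restore the value the read destroyed
        return bit


plane = CorePlane(4, 4)
plane.write(2, 3, 1)
print(plane.read(2, 3), plane.read(2, 2), plane.read(1, 3))  # 1 0 0
```

The cores at (2, 2) and (1, 3) share a wire with the selected core, so they each receive half-current during its write, yet they stay unswitched. This is the property that lets a single pair of wires pick out one element of the array.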

Satisfied that he potentially had the technical basis for a workable three-dimensional digital computer storage medium, Forrester in late 1950 assigned graduate student William L. Papian the task of further research into magnetic core storage and of building a demonstration three-dimensional memory. By May 1952, Papian had devised a working 16-by-16 two-dimensional array with very short switching times, sometimes as short as one microsecond. By May 1953 Papian and his assistants had built a 32-by-32-by-16 three-dimensional array which was working very reliably with a small memory test computer. In August 1953, Forrester directed that the three-dimensional magnetic core storage array should be wired into the WHIRLWIND computer for testing. They found that memory cycle time dropped from an average of 25 microseconds with electrostatic storage tubes to an average of nine microseconds with magnetic core storage. [Redmond 1, pp 54-55] Even though others were simultaneously investigating various approaches to magnetic core computer storage, computer historians generally credit Forrester as the inventor because he got there first with working magnetic core storage.

The Biggest Computer Known to Mankind

One of Jay Forrester’s tasks under the MIT contract with the Air Force was preparation of the specifications for production SAGE computers, and then acquisition of two prototypes. The MIT acquisition task included selecting the computer builder. The specifications called for machines of basic WHIRLWIND architecture, two magnetic core main memories in each machine, and forced air cooling of the more than 25,000 vacuum tubes in each machine. Each main memory would contain more than 130,000 magnetic cores in a three dimensional array. Two computers would be installed at each of 25 SAGE sites. Forrester and his staff developed a list of approximately 20 candidate companies and then by process of elimination pared the list to five: Bell Laboratories, Remington Rand Univac, IBM, Radio Corporation of America, and Raytheon. RCA and Bell Labs opted out because of other workload, leaving three companies for Forrester to visit and interview in the summer of 1952. [Pugh, pp 93-100]

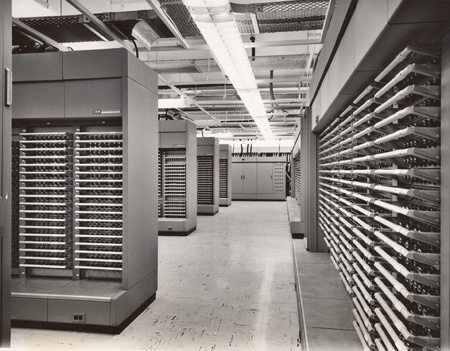

On 27 October 1952 MIT issued a subcontract to IBM to build two prototype SAGE computers in close collaboration with Forrester and his engineering staff. The machines were given the military designation AN/FSQ-7. The first of the prototypes was to be delivered to Lexington, MA, in 1955 to replace WHIRLWIND. [Redmond 2, p 192, p 248] In February 1954 the Air Force issued a production contract to IBM for the first run of AN/FSQ-7 computers. To enable round the clock operation, one of the two computers at each SAGE site would be switched to the radar inputs, display consoles, and other peripheral equipment as the ‘operating’ computer, while the second machine would be under maintenance or in a ‘hot standby’ condition. If the operating machine experienced a failure, the standby machine could be rapidly switched into the system to carry the load. AN/FSQ-7 computer production amounted to almost one half of IBM’s computer sales until the late 1950s. [Watson, Thomas J, Jr., and Petre, Peter, Father Son & Co. - My Life at IBM and Beyond, Bantam Books, New York, 1990, pp 232-233] [Pugh, pp 124-127]
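The operating/standby arrangement at each SAGE site can be illustrated with a toy model. This Python sketch is hypothetical and makes no claim about the AN/FSQ-7's actual switchover hardware: `Machine`, `DuplexSite`, `handle`, and `switch_over` are invented names, a failure is reduced to a `healthy` flag, and the model ignores how the standby machine kept its picture of the air situation current.

```python
from dataclasses import dataclass


@dataclass
class Machine:
    name: str
    healthy: bool = True


class DuplexSite:
    """Hypothetical duplex pair: one machine on line, the other in hot standby."""

    def __init__(self, first: Machine, second: Machine) -> None:
        self.operating, self.standby = first, second

    def switch_over(self) -> None:
        """Swap roles so the standby machine takes the inputs and displays."""
        if not self.standby.healthy:
            raise RuntimeError("both machines are down")
        self.operating, self.standby = self.standby, self.operating

    def handle(self, radar_report: str) -> str:
        # If the on-line machine has failed, bring the standby machine into the system first.
        if not self.operating.healthy:
            self.switch_over()
        return f"{self.operating.name} processed {radar_report}"


site = DuplexSite(Machine("A"), Machine("B"))
print(site.handle("track 1"))  # A processed track 1
site.operating.healthy = False
print(site.handle("track 2"))  # B processed track 2
```

After the switchover the failed machine becomes the standby, where it can be repaired without interrupting service. This mirrors, in a very simplified way, how pairing two computers kept each site on the air.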

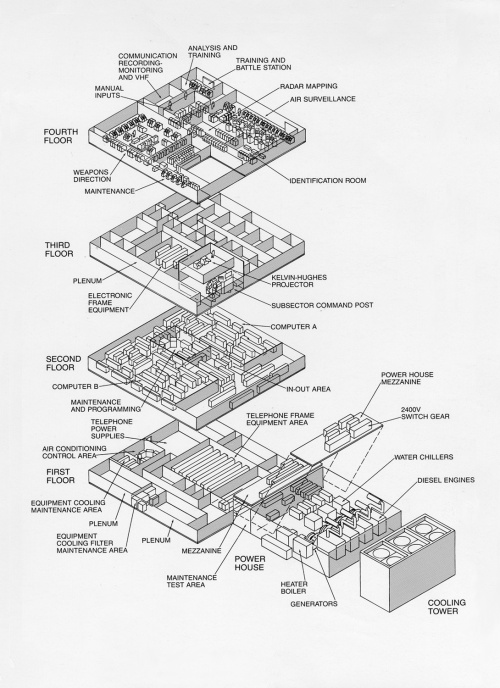

The first SAGE Air Defense Site went operational at McGuire AFB in July 1958, and the last site became operational three and one half years later at Sioux City, Iowa, on 15 December 1961. The SAGE computers were housed in large windowless steel reinforced concrete buildings at Air Force bases around the United States. The buildings occupied about one acre of ground, and one of the four stories was filled with the two computers, which took up 40,000 square feet of floor space and used 3 million watts of electrical power. Most of that power was either consumed by vacuum tube filaments or used to run the massive air conditioners that removed the filaments’ heat. The AN/FSQ-7 computers were thus the physically largest computers ever built. The combination of two computers at each site gave the sites an average up time of over 97%. The SAGE system remained in operation until February 1984. [Redmond 2, p 431] [Pugh, p 126] [Bell, Gwen, “Digging for Computer ‘Gold’,” IEEE Spectrum, Vol. 22, No. 12, Dec 1985, pp. 56-57] [Watson, pp 272-273]

When we left our story of the navy codebreakers, they were searching for a more flexible codebreaking machine: one that did not have to be redesigned and rebuilt to target new encryption systems. It turns out that the University of Pennsylvania would give the navy codebreakers a big boost in their quest when the University’s Moore School of Electrical Engineering sponsored a series of lectures in July and August of 1946 called Theory and Techniques for Design of Electronic Digital Computers, based on the School’s work on the ENIAC and EDVAC computers. Captain Joseph N. Wenger, Commanding Officer of the navy codebreakers’ headquarters facility, Communications Supplementary Activity, Washington (CSAW), had been advised of the lectures and directed Lieutenant Commander James T. Pendergrass to attend, with the special direction to evaluate the new general purpose, stored program computer architecture for codebreaking use.

Pendergrass returned to CSAW highly enthused. He felt the EDVAC architecture could form the basis for the general purpose codebreaking machine the Navy sought. Instead of changing hardware to attack new encoding systems, a new computer program could be written and loaded into the general purpose machine. He even brought back samples of computer programs. After review of Pendergrass’ enthusiastic report, Capt Wenger directed CSAW’s engineering staff to prepare a technical specification for a new codebreaking computer. CSAW did not do its own contracting for codebreaking devices; instead, the CSAW engineers relied on the Special Applications Branch of the Electronics Design and Development Division of the Bureau of Ships in Washington, D.C.

The Naval Computing Machine Laboratory (NCML), now collocated with the Engineering Research Associates plant on Minnehaha Ave. in St. Paul, Minnesota, was actually a field activity of the Bureau of Ships (BuShips). The Bureau’s Special Applications Branch, which had its Computer Design Section physically located in the CSAW facilities on Nebraska Avenue in Washington, D.C., issued a twofold task to the Computing Machine Laboratory. The first half of the task was for NCML to work up the preliminary design of a powerful new general purpose, stored program computer to be used for codebreaking. The second half was to closely monitor the growing capabilities of Engineering Research Associates and to advise the Bureau of Ships if, and when, ERA would be capable of doing the detailed design and construction of the new computer. [Snyder, Samuel S., Influence of U.S. Cryptologic Organizations on the Digital Computer Industry, National Security Agency, Fort George G. Meade, MD, May 1977, p 6]

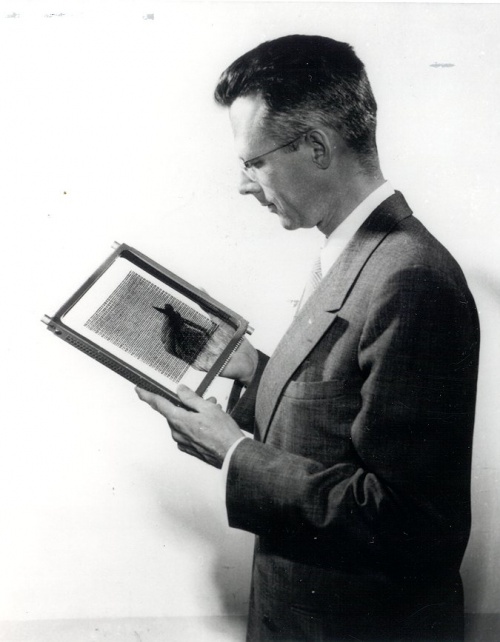

The Computing Machine Lab personnel did closely monitor ERA activities and capabilities, and in August 1947 they reported to BuShips that ERA was indeed ready to take on detailed design and construction of the codebreaking computer. In response, the Special Applications Branch awarded ERA a contract, given the sequential number “Task 13,” to begin design. The Navy also assigned a code name to each codebreaking device task, and in this case they came up with the designation “Atlas,” named after a character in the contemporary comic strip ‘Barnaby’; Atlas in the strip had phenomenal mental capabilities. [Hakala Associates, Inc., St. Paul, Minn., Engineering Research Associates - The Wellspring of Minnesota’s Computer Industry, Communications Department, Sperry Corp., 1968, p 7] [Snyder, p 8] The CO of the Computing Machine Lab assigned leadership of the Atlas project to the Lab’s Technical Officer, Lieutenant Commander Edward C. Svendsen. We have seen him once previously in this narrative, in the group photo of graduates of the Navy’s first radar course for naval officers given at the Naval Research Laboratory.

Lieutenant Commander Edward C. Svendsen

Edward Svendsen was also a Minnesota native and, for one year, had studied electrical engineering at the University of Minnesota before gaining an appointment to the U.S. Naval Academy, where among other things he played first string on the Academy football team. He graduated from the Academy in June 1941, and was assigned to the battleship Mississippi as assistant navigator. Soon after Svendsen’s arrival on board, the ship was advised that Mississippi was to have an SC air search radar installed during a forthcoming shipyard availability, and was directed to select a junior officer to attend a course in radar at the Naval Research Laboratory in preparation for his assignment as the ship’s radar officer. Because of his one-year study of electrical engineering, and his qualification as an amateur radio operator, Svendsen was selected to attend. He completed the course in October 1941 and returned to his ship which by that time was off Iceland on convoy escort duty.

A few days after the Japanese attack on Pearl Harbor, Mississippi was ordered to join the Pacific fleet after first transiting the Panama Canal and reporting to San Francisco Naval Shipyard where she would receive her SC radar installation. To gain practical experience, Svendsen and his enlisted men helped shipyard workers install the radar and check it out. For the next three years on Pacific combat duty, Svendsen remained as ship’s radar officer. During that time, the Navy had been formulating the concept of shipboard combat information centers to better use the data supplied by the new radars, and Svendsen was reassigned as the ship’s first combat information center officer. The ship made a suitable space available for him, but it was up to Svendsen to work out the required equipage, layout, and radio and telephone communication circuits. Then it was up to him and his enlisted personnel to acquire the needed equipment, much of which he and his men ‘scrounged’ whenever they were in a shipyard, repair, or supply facility.

By the fall of 1944, Svendsen had risen from Ensign to Lieutenant Commander, and the Bureau of Naval Personnel, noting his previous electrical engineering studies and his extensive practical experience in electronics, ordered him to a three-year course of study in electrical engineering at the U.S. Naval Postgraduate School at Annapolis, MD. Upon graduation with a master’s degree in 1947, he was designated a naval engineering duty officer, and received orders to the U.S. Naval Computing Machine Laboratory at St. Paul, Minnesota. Here he would shift his area of specialty from radar to the top secret, arcane world of building codebreaking computers. [Norberg, Arthur L., An Interview with Edward C. Svendsen, OH 121, Charles Babbage Institute, The Center for the History of Information Processing, University of Minnesota, Minneapolis, Sept. 16, 1986]

Building Atlas

It will be recalled that the WHIRLWIND computer began life as a navy project. As soon as the Special Applications Branch began work on the preliminary specifications for what would become the Atlas computer, they obtained permission from the Bureau of Aeronautics to review WHIRLWIND planning and design in detail, and BuShips subsequently decided to pattern the new codebreaking computer on WHIRLWIND’s architecture. The main differences were to be a 24-bit word length in the codebreakers’ computer rather than MIT’s 16-bit word, and use of a different memory type than WHIRLWIND’s electrostatic tube memory. [Snyder, p 6]

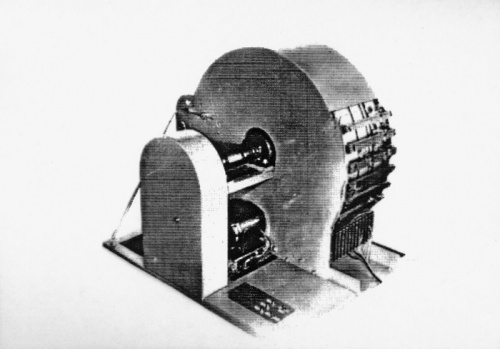

Even before the advent of the general purpose, stored program digital computer, several of the special purpose codebreaking devices that ERA built for the navy needed temporary data storage in binary format. As early as 1946 the Bureau of Ships had issued a contract to ERA to investigate binary storage on rotating magnetic drums. Analog magnetic recording was not new, but the writing and reading of binary data on a moving magnetic medium was. ERA engineers began their magnetic drum memory experiments by applying a mixture of iron particles and glue onto a cylinder and then magnetizing spots of this material. They found that with a crude pickup head they could ‘read out’ the moving magnetic spots when the cylinder was rotated. Next they tried gluing strips of magnetic recording tape to a cylinder and achieved even better results. The main problem with this arrangement was that the ends of the tapes curled up where they met on the cylinder. Their best solution was a magnetic liquid obtained from the nearby Minnesota Mining and Manufacturing (3M) company in Minneapolis. When the liquid was spray painted on a cylinder it gave a uniform, smooth coating and good binary recording and readout performance.

Other computer pioneers had built small demonstration computers using rotating magnetic drums, but both they and ERA faced the problem that in order to change any of the data on the drum, the entire store had to be erased before new data could be written. In 1948 ERA engineers devised circuitry that allowed selective erasing and writing on a rotating magnetic drum without erasing the entire memory store. In so doing they came up with a viable memory for a general purpose stored program digital computer. ERA memory drums would become an industry standard and found use in many other applications. For example, IBM used ERA memory drums as secondary storage in their AN/FSQ-7 SAGE computers. The Special Applications Branch decided that Atlas would use a rotating magnetic memory drum as main memory. [Hakala Associates, pp 8-9]
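The value of selective writing can be seen in a toy model of a drum. The Python sketch below is illustrative only: `MagneticDrum`, its track-and-sector layout, and its one-word-per-cell granularity are hypothetical assumptions, not ERA's circuitry. A write waits for the target sector to rotate under the heads and then replaces just that cell, leaving every other word intact; the returned value is the rotational wait in sectors, the latency that any drum memory pays.

```python
class MagneticDrum:
    """Hypothetical drum: `tracks` x `sectors` word cells rotating past fixed heads."""

    def __init__(self, tracks: int, sectors: int) -> None:
        self.sectors = sectors
        self.cells = [[0] * sectors for _ in range(tracks)]
        self.position = 0  # sector currently passing under the heads

    def _rotate_to(self, sector: int) -> int:
        """Let the drum turn until `sector` is under the heads; return sectors waited."""
        wait = (sector - self.position) % self.sectors
        self.position = sector
        return wait

    def write(self, track: int, sector: int, value: int) -> int:
        # Selective write: only this one cell is erased and rewritten;
        # no other word on the drum is disturbed.
        wait = self._rotate_to(sector)
        self.cells[track][sector] = value
        return wait

    def read(self, track: int, sector: int) -> int:
        self._rotate_to(sector)
        return self.cells[track][sector]


drum = MagneticDrum(tracks=4, sectors=8)
print(drum.write(0, 0, 11))  # 0: sector 0 is already under the heads
print(drum.write(1, 5, 22))  # 5: wait five sectors
print(drum.write(2, 2, 33))  # 5: wrap around past sector 7
print(drum.read(1, 5))       # 22: earlier word survived later writes
```

Averaged over randomly chosen sectors, the wait in this model is about half a revolution, which is why drum access times were measured in milliseconds rather than the microseconds later achieved with magnetic cores.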

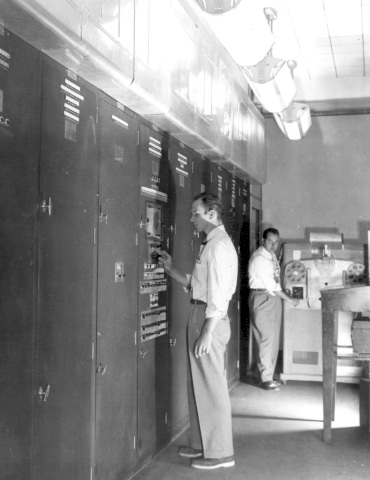

In March 1948 the Bureau of Ships authorized Engineering Research Associates to proceed with Atlas construction, under the review and guidance of the Computing Machine Laboratory. LCDR Svendsen and his men would have review and approval authority over every aspect of Atlas’ design, as well as managing a rigorous design change control system. The new machine was to be delivered to the CSAW facilities on Nebraska Avenue in Washington DC after acceptance by NCML engineers, and a four-year construction and test schedule was established; terminating on the date that Atlas would be up and running at CSAW.

About half of the twenty Computing Machine Laboratory military personnel were officers and warrant officers, and the other half were senior enlisted men, most of whom were chief petty officers. The warrant officers were specialists in electronics, the commissioned officers were engineering duty officers with master’s degrees in electrical engineering, and the enlisted men were rated in radio and radar electronics. All the navy personnel had been to sea in jobs involving use and maintenance of electronic equipment. All not only understood digital technology, but also knew the physical attributes they wanted embodied in the new computer, and they knew from practical experience how to achieve them. They insisted upon high reliability, component accessibility, ease of maintenance, and good maintenance manuals. Instead of the point-to-point “spaghetti wiring” which typified many of the experimental computers under construction, they required that the machine be built in modules, ranging from mainframe chassis units down to plug-in electronics modules, so that a failed module could be quickly removed and replaced with a good one while the failed module was taken to a shop for repair.

LCDR Svendsen required that the largest chassis modules be capable of fitting through a standard navy shipboard watertight door, just in case the computer had to be either installed or transported in a navy ship. NCML also emphasized provision of readily accessible test points and troubleshooting aids. NCML tested every item of equipment before it was shipped to CSAW and would not release it until satisfied with its performance. The Lab also required its review and approval of all supporting documentation. The ERA engineers acknowledged that although they chafed under the navy’s strict security and equipment configuration management requirements, they did appreciate the navy’s design guidance and review. ERA became known as a company that built ‘finished’ equipment that worked when you turned it on. [Hakala Associates, pp 9-11]

A number of months before Atlas was to be delivered, the Bureau of Ships contracted with ERA to build a more powerful version of Atlas incorporating every known advance in the state of the art. This prompted the navy to redesignate the original Atlas as ‘Atlas I’ and the new machine as ‘Atlas II’. It was to have a 36-bit word length, electrostatic memory as main working memory, a new, larger magnetic drum as secondary memory, and additional instructions in its instruction repertoire. It would also have more types of input/output peripheral equipment, including magnetic tape handlers, punched card equipment, and paper tape reader/punches. It would be a far more powerful machine than Atlas I. [Metropolis, N., Howlett, J., and Rota, Gian-Carlo, A History of Computing in the Twentieth Century, Academic Press, Inc., 1980, ISBN 0-12-491650-3, pp 491-492] [Pugh, pp 32, 91-92, 115-117] [Snyder, p 8]

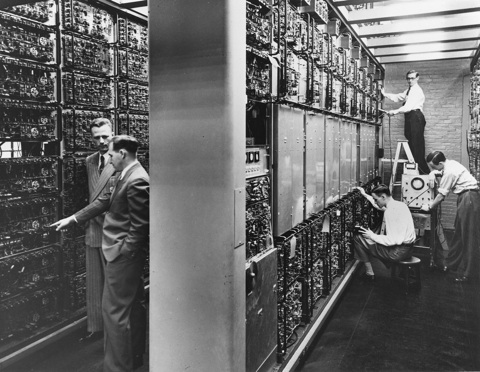

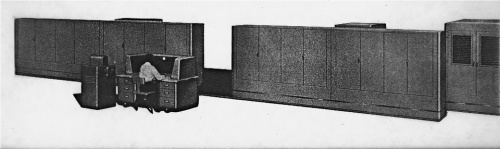

The final Atlas I design called for 2,385 crystal diodes and 2,700 vacuum tubes. Its high speed rotating magnetic drum memory held 16,384 24-bit words, and the entire 17,400 pound computer occupied 400 square feet of floor space. ERA delivered Atlas I to CSAW in December 1950 and had its modules reassembled in the space of eight days. When Atlas I was connected to power and switched on, it came up immediately with no faults. Navy cryptologists took over the machine the day it was switched on and began using it on a 24-hour-a-day basis, with ten percent of the time allocated to scheduled preventive maintenance, which consisted primarily of preemptive tube replacement. During the machine’s first 500 hours of use, it was necessary to shut it down for a total of only 16 hours of nonscheduled repairs. ERA engineer Arnold Cohen stated in a later interview, “It’s my belief that Atlas I was the first American stored-program computer to be delivered - delivered in finished, working condition.” [Hakala Associates, p 9] [Metropolis, p 490]

Engineering Research Associates could see obvious commercial applications for Atlas I, and soon after its delivery and successful operation, the company sent a request to the Navy to build commercial versions of the machine. The navy approved the request with the requirement that ERA could never reveal the existence of the navy Atlas series of computers, or their application to codebreaking. In December 1951 ERA announced the availability of their new “1101” general purpose computer. Their commercial designation 1101 was the binary representation of the navy task 13 under which Atlas I had been built. No one in industry had known that ERA had been developing a large scale digital computer so the announcement made a few waves in the young computer industry. ERA offered only the sparest of input/output peripheral equipment and no operator or programming manuals, so it is not surprising they made no sales. They built only one 1101 and used it in their own facilities in Arlington, VA. The 1101 did become the start of 1100 series computers which ERA and its successor, Univac Division of Sperry Rand Corporation, successfully marketed for years. [Hakala Associates, p 15] [Snyder, p 8] [Metropolis, p 90]
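The naming trick behind the 1101 is simple base-2 arithmetic: 13 = 1×8 + 1×4 + 0×2 + 1×1, so the task number written in binary digits is 1101. The short Python sketch below reproduces the conversion in both directions; the function names are hypothetical and only illustrate the arithmetic.

```python
def task_to_designation(task: int) -> str:
    """Write a task number as binary digits (no '0b' prefix), as in Task 13 -> '1101'."""
    return format(task, "b")


def designation_to_task(name: str) -> int:
    """Read a designation back as a base-2 numeral."""
    return int(name, 2)


print(task_to_designation(13))      # 1101
print(designation_to_task("1101"))  # 13
```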

Atlas II Gets Transistorized

In early 1951 the US Government opted to combine the armed services’ codebreaking activities into one organization; thus CSAW and the Army Security Agency were merged into the Armed Forces Security Agency (AFSA). Then in November 1952 this organization was absorbed into the newly formed National Security Agency (NSA). AFSA and then NSA both requested that the BuShips Special Applications Branch continue to serve as their computer acquisition agent until the requisite technical and contracting expertise was built up in their organizations. [Snyder, p 8]

In January 1951 Jay Forrester published an article on magnetic core computer memories in the Journal of Applied Physics, which immediately captured the attention of Armed Forces Security Agency engineers, causing them to request that the Bureau task ERA with the design and construction of a second Atlas II using magnetic cores, rather than electrostatic tubes, as main memory. The National Security Agency took delivery of the second Atlas II in November 1954. It was equipped with 36 planes of 32 by 32 magnetic core arrays. [Pugh, Emerson W., Memories That Shaped an Industry - Decisions Leading to the IBM System/360, the MIT Press, Cambridge, MA, 1984, ISBN 0-262-16094-3, pp 32, 91] [Snyder, p 8]
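The plane count and plane size fix the memory's capacity. Assuming the usual coincident-current organization, in which each plane holds one bit position of every word (the source gives only the plane count and dimensions), the 36 planes match Atlas II's 36-bit word:

$$
\underbrace{36}_{\text{planes} \,=\, \text{bits per word}} \times \underbrace{(32 \times 32)}_{\text{cores per plane} \,=\, \text{words}} = 36 \times 1{,}024 = 36{,}864 \text{ cores} \;\Longrightarrow\; 1{,}024 \text{ words of } 36 \text{ bits}
$$

By the same reasoning, Papian's 32-by-32-by-16 demonstration array held $32 \times 32 \times 16 = 16{,}384$ cores, or 1,024 words of 16 bits, matching WHIRLWIND's 16-bit word.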

In late 1951 LCDR Svendsen received orders for a three-year tour at San Francisco Naval Shipyard, where he was to learn more about the realities of naval ship construction and installations. By 1954 he had been promoted to Commander and had received orders to relocate to Washington, DC, to take over the Bureau of Ships Special Devices Branch, which included the Computer Design Section at the Naval Security Station on Nebraska Avenue. Soon after his arrival, Svendsen received a request from the National Security Agency to acquire a new computer that would be radically different from anything he had ever developed before. NSA was eager to have computer systems that gave cryptanalysts direct real-time access to computing facilities, rather than their current process of submitting batch jobs to a computing center. They had considered two approaches for giving the analysts direct access: one was remote terminals connected to a central computer, and the other was to give each analyst his own “personal computer.”

Bell Laboratories had invented the transistor in 1947, and by 1954 transistors were beginning to make their way into some specialized electronic devices, although not in large numbers. NSA engineers were well aware of transistors and their potential to take the place of vacuum tubes as the active elements in electronics equipment. They also appreciated the ability of transistors to dramatically reduce the volume of electronic devices, and they opted to request the Special Devices Branch to develop a transistorized version of Atlas II, with magnetic core memory, for evaluation as a cryptanalyst’s desk sized personal computer. NSA gave the project the code name “SOLO.”

The original transistors invented at Bell Labs were called point contact transistors. Even though the Lab had invented an improved transistor in 1951, called a junction transistor, having better performance and reliability than point contact transistors, junction transistors were being made only in small quantities in late 1954. To get the number of transistors required by a full scale computer, Svendsen had no choice but to use point contact transistors, and he could find only one company that made the needed quantity with acceptable reliability. That company was Philco Corporation. To hedge his bets, Svendsen decided not only to have Philco provide the point contact transistors, but also to contract with them to design and build the SOLO computer under the technical guidance of the Naval Computing Machine Laboratory. The contract did specify that Philco would subcontract with Univac of St. Paul, Minnesota (the former ERA) to provide SOLO’s magnetic core memory.

Building a device made of a few thousand point contact transistors, and integrating the transistor circuits with magnetic core memory, was a severe technical challenge. After three years of design, development, and testing by Philco, NSA opted in March 1958 to take delivery of SOLO even though it was not yet fully operational. NSA engineers then set out to make it functional, which they accomplished after a year of tweaking. Even though they could make it run, they found it relatively unreliable, and NSA ordered no more transistorized SOLO computers. The agency did operate the machine for a number of years, employing it mainly for programmer training and for testing other equipment. The greatest value of SOLO was probably the learning experience it provided. Philco Corporation also built a commercial version of SOLO which they designated TRANSAC S-1000. [Snyder pp 18-19]

Others were also making first attempts at building transistorized large scale computers. In February 1950 Remington Rand (later Sperry Rand Corp.) had bought the Eckert-Mauchly Computer Corporation and made it their new Univac Division. Then in fall 1951 Remington Rand bought Engineering Research Associates and also incorporated the former ERA into the Univac Division; however, the two components of the new division remained geographically separate. About the same time that Philco began work on SOLO, the US Air Force and the former ERA part of the Univac Division entered into an independent research and development agreement to build a transistorized large scale computer.

Seymour Cray, a young former ERA engineer who had worked on the Atlas computer series, was assigned to design the transistor circuitry, resulting in a 24-bit word length machine with a 4,000-word magnetic core memory. The new machine was named TRANSTEC and was completed in 1957. Even though TRANSTEC had the performance of a room-filling large scale vacuum tube computer, it occupied only 77.8 cubic feet and weighed only 800 pounds. It also had both greater speed and greater reliability than an equivalent tube-based computer. [Hakala Associates, p. 19] [Graf, R. W., Case Study of the Development of the Naval Tactical Data System, National Academy of Sciences, Committee on the Utilization of Scientific and Engineering Manpower, Jan. 29, 1964, p. V-2]

We have seen that by 1955 the Air Force SAGE system was solving the continental air defense data handling problem with a network of warehouse-filling vacuum tube based computer systems. At the same time, more than one organization was working on condensing the size of a full scale digital computer, through the use of transistors in lieu of vacuum tubes, to something that could easily fit into the confines of a navy ship compartment. The solution to the navy’s air defense data handling problem, which was overwhelming shipboard combat information center plotting teams, was close at hand. What it would take now was individuals who understood the navy’s problem and who had the vision and insight to apply emerging technologies. In the next chapter we will continue to follow the careers of the two men who would solve the problem: Commanders Irvin L. McNally and Edward C. Svendsen. The story continues in Chapter 3 of the Story of the Naval Tactical Data System, titled "McNally's Challenge, Conceptualizing the Naval Tactical Data System."